Overview

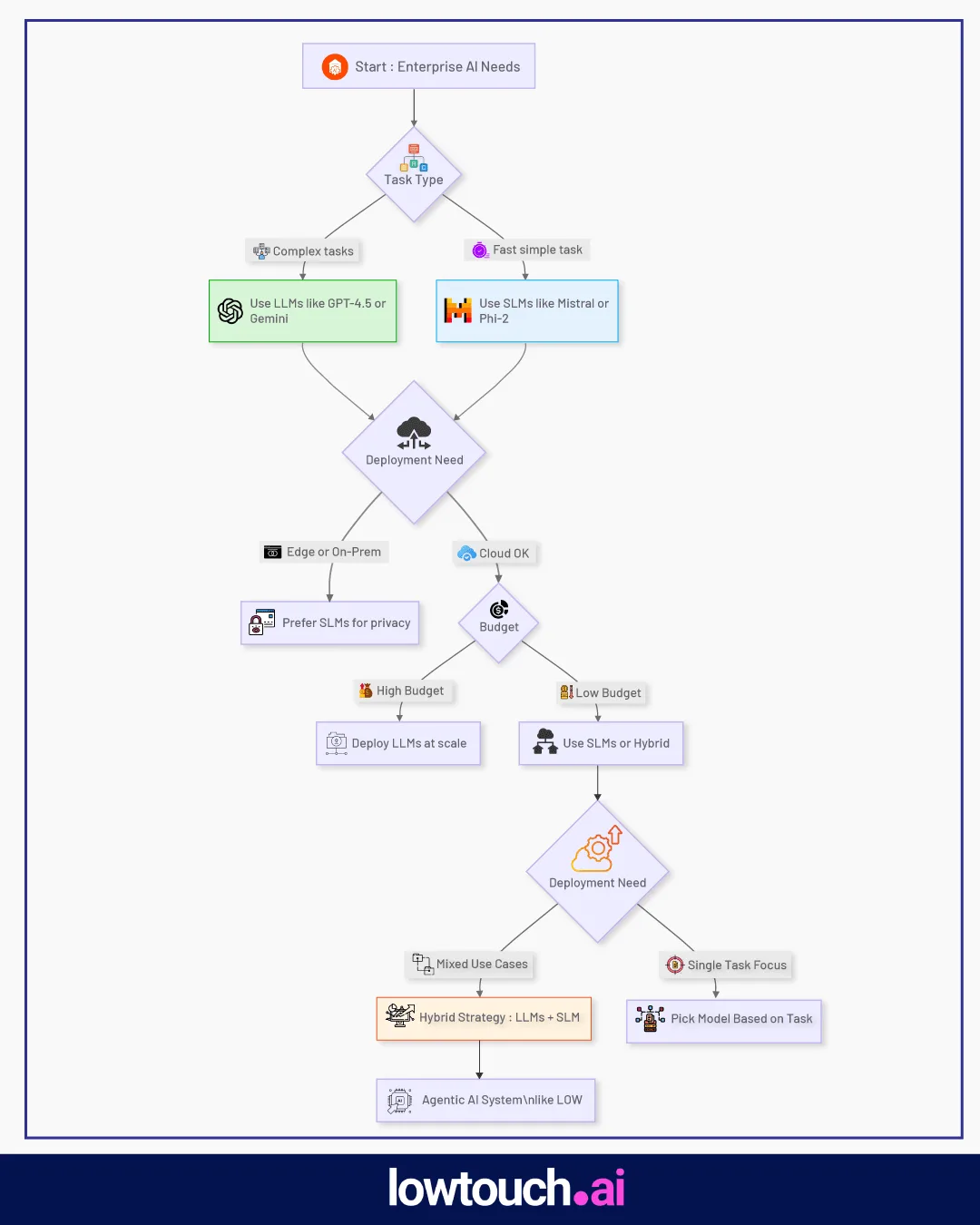

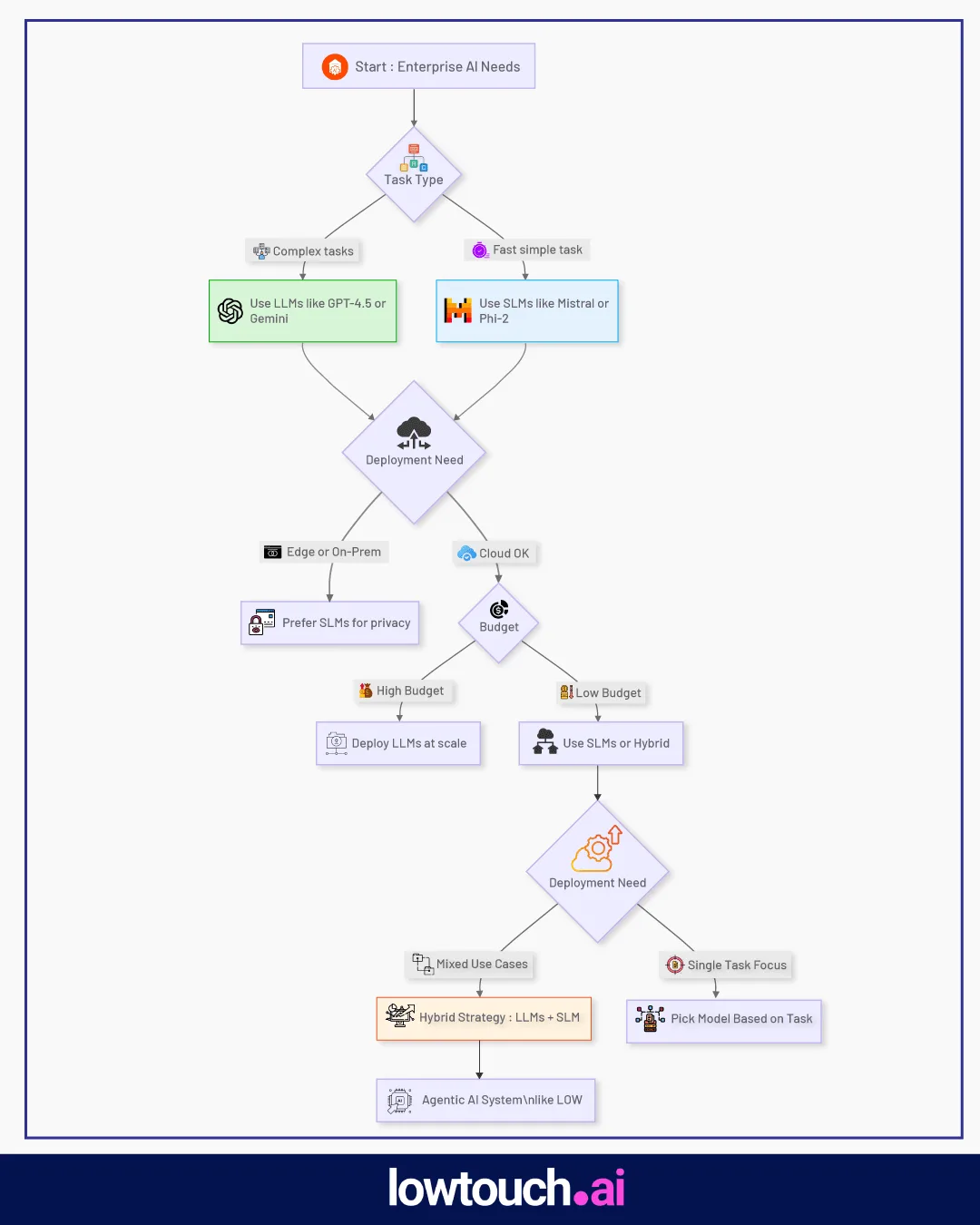

In the fast-evolving world of artificial intelligence, enterprises face a critical decision: which language model best suits their needs? Large Language Models (LLMs) and Small Language Models (SLMs) offer distinct advantages, and choosing the right one—or a combination of both—can significantly impact performance, cost, and scalability. This blog post, crafted for CTOs, AI architects, and innovation leads, explores the differences between LLMs and SLMs, their enterprise use cases, and how a hybrid approach can optimize AI deployments. With insights from Lowtouch.ai, we’ll guide you through making informed decisions for your business.

What Are LLMs and SLMs?

Large Language Models (LLMs)

LLMs are AI models with billions of parameters, trained on massive datasets to understand and generate human-like text. They excel in complex reasoning, large context handling, and multimodal tasks. Examples in 2025 include:

- GPT-4.5 (OpenAI): Advanced reasoning and multimodal capabilities.

- Claude Sonnet 4 (Anthropic): Efficient with a 200K-token context window.

- Gemini 2.5 (Google): Handles text, images, and code with a 1M-token context.

LLMs are powerful but resource-intensive, often requiring cloud infrastructure or high-end GPUs.

Small Language Models (SLMs)

SLMs are compact models with fewer parameters, designed for efficiency and specific tasks. They require less computational power and are ideal for low-latency, cost-effective applications. Examples include:

- Mistral Small 3 (Mistral AI): 24 billion parameters, optimized for low-latency tasks.

- Phi-2 (Microsoft): Lightweight for edge devices.

- DistilGPT2 and TinyLlama: Open-source models for narrow, efficient tasks.

SLMs are perfect for scenarios prioritizing speed, cost, and on-premise deployment.

Core Differences: Speed, Cost, Accuracy, Footprint

Understanding the trade-offs between LLMs and SLMs is key to selecting the right model. Here’s a detailed comparison:

| Aspect | LLMs | SLMs |
| --- | --- | --- |
| Model Size | Billions of parameters (e.g., 671B for DeepSeek-R1) | Fewer parameters (e.g., 24B for Mistral Small 3) |
| Compute Requirements | High (cloud or high-end GPUs) | Low (single GPU or edge devices) |
| Performance | Superior for complex, multimodal tasks | High for specific, low-latency tasks |
| Cost | High (training costs in billions) | Cost-effective (30x cheaper than some LLMs) |
| Context Length | Large (e.g., 1M tokens for Gemini 2.5) | Smaller but sufficient for many tasks |
| Deployment | Often cloud-based, privacy concerns | On-premise or edge, better privacy control |

- Model Size and Compute Requirements: LLMs like DeepSeek-R1 (671B parameters) demand significant resources, while SLMs like Mistral Small 3 (24B parameters) can run, once quantized, on a single GPU or even a MacBook with 32GB RAM.

- Performance vs. Latency: LLMs excel in complex tasks but tend to have higher latency. Mistral Small 3, for example, generates up to 150 tokens/second, about 3x faster than a larger model such as Llama 3.3 70B on the same hardware.

- Cost to Run and Fine-Tune: Training LLMs can cost billions, though models like DeepSeek-R1 have reduced costs significantly. SLMs are far more cost-effective, making them attractive for budget-conscious enterprises.

- Memory and Context Length: LLMs handle massive context windows (e.g., Gemini 2.5’s 1M tokens), while SLMs have smaller but adequate context lengths for many tasks.

- Privacy and On-Prem Deployment: SLMs are easier to deploy on-premise or on edge devices, which keeps data inside the organization and simplifies compliance with regulations like GDPR and HIPAA. LLMs, by contrast, often rely on cloud infrastructure.
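
The size and speed figures above can be turned into rough back-of-the-envelope numbers. The sketch below estimates weight memory as parameters times bytes per parameter, and response time as output tokens divided by throughput. The function names are illustrative, and the estimate covers weights only: KV cache, activations, and runtime overhead are ignored, so real memory use will be higher.

```python
# Rough sizing sketch: weights only, ignoring KV cache, activations, and overhead.

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, precision: str) -> float:
    """Approximate memory for the model weights, in GB (10^9 bytes)."""
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * BYTES_PER_PARAM[precision]


def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to produce a response at a steady decoding throughput."""
    return output_tokens / tokens_per_second


if __name__ == "__main__":
    for name, size in [("Mistral Small 3", 24), ("DeepSeek-R1", 671)]:
        for precision in ("fp16", "int4"):
            gb = weight_memory_gb(size, precision)
            print(f"{name} ({size}B) @ {precision}: ~{gb:.0f} GB of weights")
    # A 300-token reply at the 150 tokens/second figure cited above.
    print(f"300 tokens at 150 tok/s: {generation_seconds(300, 150):.1f} s")
```

At 16-bit precision a 24B-parameter model needs about 48 GB for weights alone, which does not fit in 32GB of RAM; at 4-bit it needs about 12 GB. That gap is why quantization matters for the single-GPU and laptop scenarios.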

When to Use LLMs: Enterprise Use Cases

LLMs are ideal for tasks requiring deep reasoning, large context understanding, or multimodal processing. Here are key enterprise applications:

Complex Reasoning Tasks

- Finance: Fraud detection and compliance monitoring, analyzing vast datasets for patterns.

- Healthcare: Multimodal diagnostics, combining medical scans with textual data.

- Research: Scientific simulations and genomic data analysis, leveraging models like DeepSeek-R1.

Retrieval-Augmented Generation (RAG) Pipelines

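- In a RAG pipeline, relevant passages are first retrieved from proprietary enterprise data (e.g., PII, financial records) and then passed to an LLM such as GPT-4.5, which grounds its response in that retrieved context rather than relying only on its training data.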
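
A minimal sketch of the retrieve-then-generate flow, assuming a toy in-memory document list and simple word-overlap scoring in place of a real vector index. `call_llm` is a hypothetical stand-in for whichever model client is used, and the sample documents are invented for illustration; nothing here reflects a specific vendor SDK.

```python
import re
from typing import Callable

# Toy corpus standing in for proprietary enterprise documents (illustrative data).
DOCUMENTS = [
    "Refunds over 500 USD require approval from a finance manager.",
    "Invoices are payable within 30 days of the issue date.",
    "Customer records must be stored in the EU region.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Place the retrieved passages ahead of the question to ground the model."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


def answer(query: str, call_llm: Callable[[str], str]) -> str:
    """Retrieve, build the prompt, and delegate generation to the model."""
    return call_llm(build_prompt(query, retrieve(query, DOCUMENTS)))


if __name__ == "__main__":
    # Hypothetical stub: reports the prompt size instead of calling a real model.
    fake_llm = lambda prompt: f"[model reply based on {len(prompt)} prompt characters]"
    print(answer("Who approves refunds over 500 USD?", fake_llm))
```

In a production pipeline the overlap scoring would typically be replaced by embedding similarity over an indexed store, but the shape of the flow (retrieve, assemble context, generate) stays the same.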

Agent Orchestration

- Coordinating multiple AI agents for complex workflows, such as automating back-office functions or customer interactions.

Multimodal Applications

- Models like Gemini 2.5 process text, images, and code, enabling comprehensive solutions for content generation and problem-solving.

When to Use SLMs: Enterprise Use Cases

SLMs are best for scenarios where efficiency, speed, and cost are critical. Key use cases include:

- **Fast Responses:** Customer support chatbots and virtual assistants, where low latency is essential (e.g., Mistral Small 3 for real-time interactions).

- **Edge/On-Device Use:** Real-time data processing on IoT devices or mobile apps, leveraging models like Phi-2.

- **Cost-Effective Tasks:** Handling repetitive tasks like data summarization or basic customer queries, reducing operational costs.

- **Internal Data Compliance:** On-premise deployment keeps sensitive data in-house, which helps meet strict regulations and makes SLMs a strong fit for healthcare and finance.

When to Choose LLMs vs. SLMs

The choice between LLMs and SLMs depends on several factors:

1. Business Size

- Large Enterprises: Can afford LLMs for their comprehensive capabilities, ideal for complex, cross-functional tasks.

- Small to Medium Enterprises: Prefer SLMs for cost-effectiveness and ease of deployment, especially for startups or resource-constrained businesses.

2. Type of Workflows

- Finance Automation: LLMs for fraud detection and compliance; SLMs for transaction processing.

- Sales Operations: SLMs for quick customer interactions; LLMs for in-depth market analysis.

- Customer Support: SLMs for scalable, low-latency responses; LLMs for complex query resolution.

3. Regulatory/Privacy Constraints

- Industries like healthcare and finance, with strict data privacy requirements, may favor SLMs for on-premise deployment to help meet GDPR or HIPAA obligations.

4. Budget and Inference Speed Requirements

- High budgets support LLMs for superior performance, while tight budgets or low-latency needs favor SLMs.
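
Several of the factors above can be read as a rough checklist. The sketch below encodes one possible reading of them; the field names, the 500 ms latency cutoff, and the rule ordering are illustrative assumptions, not thresholds taken from this article.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Illustrative task description; every field is an assumption of this sketch."""
    needs_deep_reasoning: bool   # e.g. fraud analysis, complex query resolution
    must_stay_on_premise: bool   # regulatory or privacy constraint
    max_latency_ms: int          # response-time budget
    budget_is_tight: bool


def recommend(w: Workload) -> str:
    """Return 'SLM', 'LLM', or 'hybrid' using simple ordered rules."""
    # Any of these pushes the workload toward a smaller, locally hosted model.
    constrained = (
        w.must_stay_on_premise or w.budget_is_tight or w.max_latency_ms < 500
    )
    if w.needs_deep_reasoning and constrained:
        # Use the SLM on the constrained path and the LLM only where reasoning is needed.
        return "hybrid"
    if w.needs_deep_reasoning:
        return "LLM"
    return "SLM"


if __name__ == "__main__":
    chatbot = Workload(False, False, 200, True)
    fraud_review = Workload(True, False, 5000, False)
    print("support chatbot ->", recommend(chatbot))
    print("fraud review ->", recommend(fraud_review))
```

A real selection process would weigh these factors against measured quality on the actual workload; the point of the sketch is only that the criteria can be made explicit and reviewed.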

SLM+LLM Hybrid Strategy in Agentic AI Systems

A hybrid approach combining LLMs and SLMs offers the best of both worlds, optimizing performance and cost. For example:

- LLMs handle complex reasoning and orchestration, such as managing multiple agents or processing large datasets.

- SLMs manage high-frequency, low-latency tasks like real-time customer interactions or edge computing.

This strategy is particularly effective in agentic AI systems, where tasks are dynamically assigned to the most suitable model. Lowtouch.ai, for instance, builds modular AI systems that seamlessly integrate LLMs and SLMs, ensuring enterprises achieve the right balance of power and efficiency.
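
One way to sketch the dynamic assignment described above is an SLM-first router that escalates to the LLM when a task is flagged as complex or the SLM reports low confidence. The model callables, the confidence score, and the 0.7 threshold are hypothetical stand-ins; this is an illustration of the pattern, not a description of Lowtouch.ai's implementation.

```python
from dataclasses import dataclass
from typing import Callable

# A model is any callable returning (answer, confidence in [0, 1]).
# This interface is an assumption made for the sketch.
Model = Callable[[str], tuple[str, float]]


@dataclass
class HybridRouter:
    slm: Model
    llm: Model
    min_confidence: float = 0.7  # assumed escalation threshold

    def run(self, task: str, complex_task: bool = False) -> tuple[str, str]:
        """Return (model_used, answer), escalating to the LLM when needed."""
        if not complex_task:
            answer, confidence = self.slm(task)
            if confidence >= self.min_confidence:
                return "SLM", answer
        # Complex tasks and low-confidence SLM answers both go to the LLM.
        answer, _ = self.llm(task)
        return "LLM", answer


if __name__ == "__main__":
    # Stub models for illustration only: the SLM is unsure about long inputs.
    stub_slm = lambda t: (f"slm:{t}", 0.9 if len(t) < 40 else 0.3)
    stub_llm = lambda t: (f"llm:{t}", 0.95)
    router = HybridRouter(stub_slm, stub_llm)
    print(router.run("Reset my password"))
    print(router.run("Plan a multi-step reconciliation", complex_task=True))
```

The design keeps the cheaper model on the hot path and pays for the larger model only on escalation, which is the cost and latency trade-off the hybrid strategy aims for.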

Lowtouch.ai’s Perspective: Matching Models to Business Workflows

At Lowtouch.ai, we believe that no single model fits all enterprise needs. Our platform is designed to match the right model to the right function:

- Complex Tasks: Leverage LLMs like GPT-4.5 or Claude Sonnet 4 for deep reasoning and large context understanding.

- Efficient Tasks: Use SLMs like Mistral Small 3 for fast, cost-effective solutions, especially in edge or on-device scenarios.

Our agent stacks are tailored to deliver optimal performance, whether automating finance operations, enhancing customer support, or driving sales efficiency. By understanding your workflows, we ensure the best model is selected for each task.

Conclusion

Choosing between LLMs and SLMs—or combining them in a hybrid approach—depends on your enterprise’s specific needs, from task complexity to budget and regulatory constraints. LLMs offer unmatched power for complex tasks, while SLMs provide efficiency and flexibility for specific applications. A hybrid strategy, as implemented by Lowtouch.ai, can maximize both performance and cost-effectiveness.

Ready to optimize your AI strategy? Explore Lowtouch.ai’s agent stacks or book a demo to see how we can tailor LLMs and SLMs to your business needs.